#14 - On prototyping, competition, addictive dashboards and life-saving computer vision

And Happy Lunar New Year to those celebrating it!

This Week’s Principle

Creating the future with rapid iterative prototyping

When it comes to getting buy-in for an innovation project, people tend to rely on business cases “proven” by historical data. The problem with this, as Roger Martin has repeatedly emphasised, is that you cannot use past data to predict the future. Instead, he argued in this piece:

“A central task in successful innovation is to generate data that will provide guidance on and encouragement for the task of creating a future (that does not now exist) - and is markedly superior to the world that exists today”.

The way to generate “future-guiding” data is rapid iterative prototyping. Roger Martin has a unique way of describing this:

“Rapid iterative prototyping divides the future into multiple thin slices of time and uses each slice to advance understanding”.

By following this process and inviting your business counterparts along on the same journey, you will gradually build up confidence in innovation.

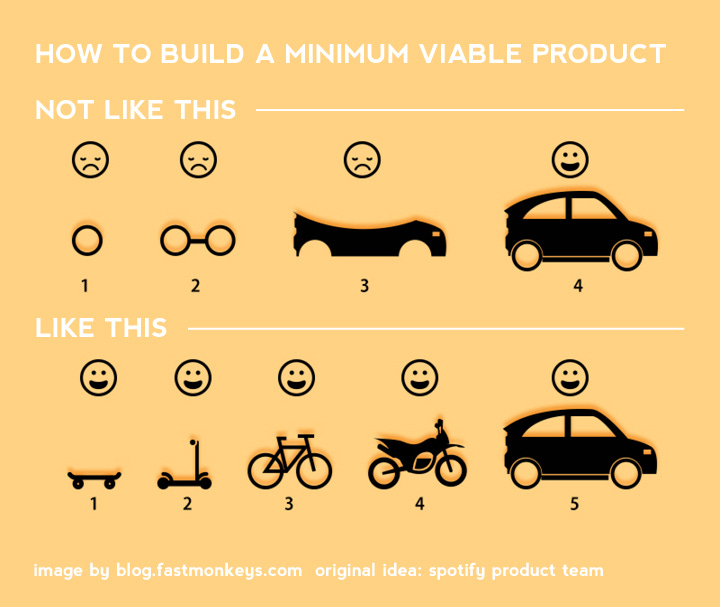

Similar to the idea of rapid iterative prototyping is the Minimum Viable Product approach, which has been described as the ideal way of building data products. Check it out.

This Week’s Strategy

The iconic strategist Michael E. Porter said: “In essence, the job of the strategist is to understand and cope with competition”.

In the context of Data Strategy, I don’t think this fully applies. As data leaders’ customers are internal stakeholders, the essence of their job, for me, is more about making trade-offs to maximise data’s value and less about coping with competition.

Nevertheless, there is still an element of fighting off competition in Data Strategy. Data leaders have to compete with the status quo - the existing ways of using data, be it manual manipulation, Excel spreadsheets, etc. Many of these existing customs have proven effective - and the question is: “If it is working, why change it?”

I’ll leave it to you to ponder that question. But my favourite strategist, Roger Martin, had a brilliant answer in this article - Superstition & Strategy. Check it out.

This Week’s Operation

Having a Comprehensive Dashboard Strategy for Analytics Managers (link)

In an insightful article, Jordan shared with us the strategy to create dashboards that are as effective and addictive as a video game:

Identify the user’s main quest and their side stories: have one centralised dashboard for standard use cases, and other dashboards for solving edge cases and explorative analysis.

Build a robust knowledge management system: build a centralised, active, searchable KMS and try different ways to train users to use it.

Improve continuously over time: find activated users, nurture super users in the communities, actively gather feedback, improve continuously and communicate often. (This is the 3rd time the idea of rapid iterative prototyping is mentioned in this newsletter, in case you haven’t noticed ;) )

These key pillars ensure adoption of your dashboards, and enhance your dashboard ecosystems. In Jordan’s words:

“A dashboard ecosystem success is as much about the “WHAT” than it is about the “HOW”. You can build the best tools in the world, but if your users don’t know about them, or if they don’t know how to use them — then they will never generate any value.”

This Week’s Impact

Using Computer Vision to detect wildfire (link)

DigitalPath and the University of California San Diego took on the challenge of detecting fire in real time in the Golden State. ALERTCalifornia - the name of the initiative - set out not only to detect fires, but also to produce a manageable amount of data for the response team to handle. The fire response centres at the time received around 10,000 alerts per day, which is roughly one every nine seconds. This is more than any human can respond to, so AI was brought in to help.

The solution - ALERTCalifornia’s AI model - processes images coming from a network of thousands of monitoring cameras and sensor arrays. It is able to identify images of smoke that come from the same fire, which reduces the number of alerts.

The team also used an adversarial network of models to decide whether something matters, and whether an alert needs to be reviewed or acted upon. To achieve this, their training data set focused on the scenes before a real fire broke out, so that the models learn to recognise the characteristics of the environment before a wildfire.

As a result, the system managed to filter 8 million daily images down to just 100 alerts. During the pilot program, it detected 77 wildfires before dispatch centres received 911 calls, about a 40 percent success rate. With this success, the team is looking to train similar models to detect other kinds of natural disasters across other regions, expanding the role of AI in creating a safer world.

That’s it for this week! If you enjoy or get puzzled by the content, please leave a comment so we can continue the discussion. Throw in a like or a share as well if you know of someone who may enjoy this newsletter. Thanks!